What's new at FAR AI

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: EA Forum

Organizational update from FAR AI, a nonprofit AI safety incubator; useful for understanding the landscape of AI safety institutions and research strategies as of late 2023.

Summary

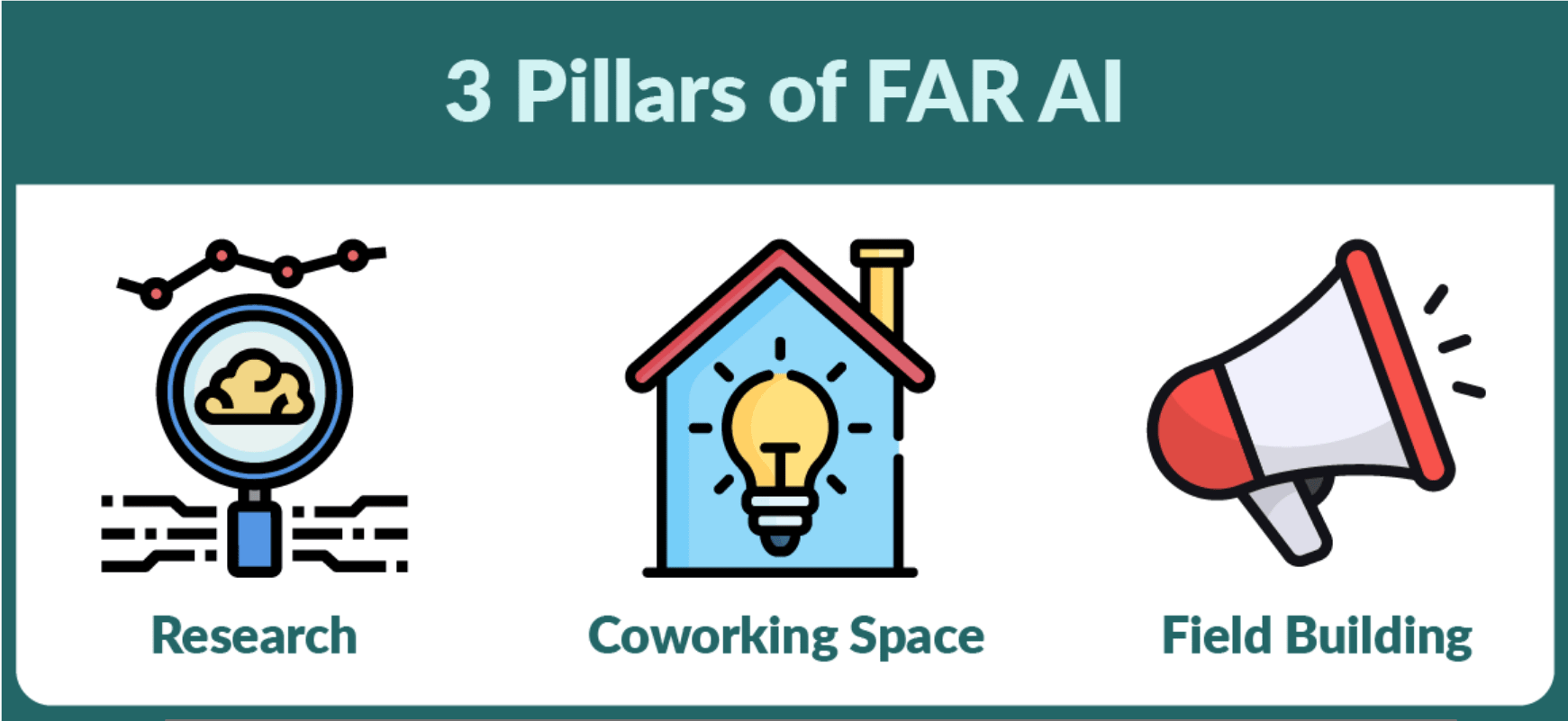

FAR AI provides an organizational update on its progress since founding in July 2022, describing its growth to 12 staff, 13 academic papers, and three core pillars: AI safety research, a Berkeley coworking space (FAR Labs), and field-building for ML researchers. The post highlights FAR's distinctive portfolio approach to safety research, targeting agendas too large for individuals but misaligned with for-profit incentives.

Key Points

- FAR AI has grown to 12 full-time staff and produced 13 academic papers since founding in July 2022.

- Research spans robustness, value alignment, and model evaluation using a portfolio approach rather than focusing on a single direction.

- FAR Labs is a Berkeley coworking space hosting 40+ members, supporting the broader AI safety research community.

- Field-building efforts include workshops and events targeting ML researchers to broaden the AI safety talent pipeline.

- FAR positions itself as filling a gap between individual researchers and for-profit labs for promising but commercially unattractive safety agendas.

Cited by 1 page

| Page | Type | Quality |
|---|---|---|
| FAR AI | Organization | 76.0 |

Cached Content Preview

# What's new at FAR AI

By AdamGleave

Published: 2023-12-04

Summary
-------

We are [FAR AI](https://far.ai): an AI safety research incubator and accelerator. Since our inception in July 2022, FAR has grown to a team of 12 full-time staff, produced 13 academic papers, opened the coworking space FAR Labs with 40 active members, and organized field-building events for more than 160 ML researchers.

Our organization consists of three main pillars:

***Research***. We rapidly explore a range of potential research directions in AI safety, scaling up those that show the greatest promise. Unlike other AI safety labs that take a bet on a single research direction, FAR pursues a diverse portfolio of projects. Our current focus areas are building a *science of robustness* (e.g. [finding vulnerabilities in superhuman Go AIs](https://far.ai/post/2023-07-superhuman-go-ais/)), finding more effective approaches to *value alignment* (e.g. [training from language feedback](https://arxiv.org/abs/2204.14146)), and *model evaluation* (e.g. [inverse scaling](https://far.ai/publication/mckenzie2023inverse/) and [codebook features](https://far.ai/post/2023-10-codebook-features/)).

***Coworking Space***. We run FAR Labs, an AI safety coworking space in Berkeley. The space currently hosts FAR, [AI Impacts](http://aiimpacts.org/), [MATS](https://www.matsprogram.org/), and several independent researchers. We are building a collaborative community space that fosters great work through excellent office space, a warm and intellectually generative culture, and tailored programs and training for members. [Applications are open](https://far.ai/labs/) to new users of the space (individuals and organizations).

***Field Building.*** We run workshops, primarily targeted at ML researchers, to help build the field of AI safety research and governance. We co-organized the [*International Dialogue for AI Safety*](https://far.ai/post/2023-10-international-dialogue/) bringing together prominent scientists from around the globe, culminating in a [public statement](https://humancompatible.ai/news/2023/10/31/prominent-ai-scientists-from-china-and-the-west-propose-joint-strategy-to-mitigate-risks-from-ai/#prominent-ai-scientists-from-china-and-the-west-propose-joint-strategy-to-mitigate-risks-from-ai) calling for global action on AI safety research and governance. We will soon be hosting the [New Orleans Alignment Workshop](https://www.alignment-workshop.com/nola-2023) in December for over 140 researchers to learn about AI safety and find collaborators.

We want to expand, so if you’re excited by the work we do, consider [donating](https://far.ai/donate/) or [working for us](https://far.ai/jobs/)! We’re hiring research engineers, research scientists and communications specialists.

Incubating & Accelerating AI Safety Research
--------------------------------------------

... (truncated, 13 KB total)