AI Pause Will Likely Backfire (Guest Post)

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

A contrarian perspective within AI safety circles arguing against AI pause advocacy, relevant to debates about governance strategy, geopolitical competition, and how to best reduce existential risk from advanced AI.

Summary

This guest post on LessWrong argues that advocating for a pause on AI development is counterproductive to AI safety goals. The author contends that pausing AI development would likely shift dominance to less safety-conscious actors, particularly authoritarian regimes, resulting in worse outcomes than continued development by safety-minded labs. The piece challenges the strategic logic underlying pause advocacy within the AI safety community.

Key Points

- A pause on AI development could cede leadership to actors with fewer safety commitments, such as China or less scrupulous developers.

- Slowing down safety-conscious Western labs may accelerate relative progress by adversarial or reckless actors, worsening overall risk.

- The post questions the political feasibility and enforceability of an AI pause, suggesting it may be unachievable in practice.

- The author argues that continued development with safety measures in place is preferable to a pause.

- The post challenges the moral intuition behind pause advocacy by focusing on counterfactual outcomes rather than absolute development speed.

Cached Content Preview

# AI Pause Will Likely Backfire (Guest Post)

By jsteinhardt

Published: 2023-10-24

_I'm experimenting with hosting guest posts on this blog, as a way to represent additional viewpoints and especially to highlight ideas from researchers who do not already have a platform. Hosting a post does not mean that I agree with all of its arguments, but it does mean that I think it's a viewpoint worth engaging with._

_The first guest post below is by Nora Belrose. In it, Nora responds to a [recent open letter](https://futureoflife.org/open-letter/pause-giant-ai-experiments/?ref=bounded-regret.ghost.io) calling for a pause on AI development. Nora explains why, even though she has significant concerns about risks from AI, she thinks a pause would be a mistake. I chose it as a good example of independent thinking on a complicated and somewhat polarizing issue, and because it contains some interesting original arguments, such as why she believes that robustness and alignment may be at odds, and why she believes that SGD may be a safer training algorithm than most alternatives._

Should we lobby governments to impose a moratorium on AI research? Since we don’t enforce pauses on most new technologies, I hope the reader will grant that the burden of proof is on those who advocate for such a moratorium. We should only advocate for such heavy-handed government action if it’s clear that the benefits of doing so would significantly outweigh the costs.^[\[1\]](#fn1)^ In this essay, I’ll argue an AI pause would increase the risk of catastrophically bad outcomes, in at least three different ways:

1. Reducing the quality of AI alignment research by forcing researchers to exclusively test ideas on models like GPT-4 or weaker.

2. Increasing the chance of a “fast takeoff” in which one or a handful of AIs rapidly and discontinuously become more capable, concentrating immense power in their hands.

3. Pushing capabilities research underground, and to countries with looser regulations and safety requirements.

Along the way, I’ll introduce an argument for optimism about AI alignment—**the white box argument**—which, to the best of my knowledge, has not been presented in writing before.

Feedback loops are at the core of alignment
===========================================

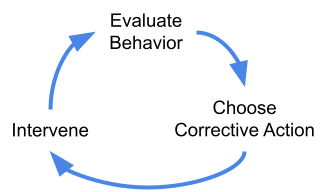

Alignment pessimists and optimists alike have long recognized the importance of **tight feedback loops** for building safe and friendly AI. Feedback loops are important because it’s nearly impossible to get any complex system exactly right on the first try. Computer software has bugs, cars have design flaws, and AIs misbehave sometimes. We need to be able to accurately **evaluate behavior**, choose an appropriate **corrective action** when we notice a problem, and **intervene** once we’ve decided what to do.

Imposing a pause breaks this feedback loop by forcing alignment researchers to test their ideas on models no more powerf

... (truncated, 30 KB total)