Situational Awareness: A One-Year Retrospective


Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

This LessWrong post revisits Leopold Aschenbrenner's widely circulated 2024 'Situational Awareness' document, which argued that AGI was imminent and framed AI as a national security priority. The retrospective is valuable for calibrating AI timelines forecasting and for evaluating the track records of high-profile predictions.

Metadata

Summary

A retrospective analysis of Leopold Aschenbrenner's influential 'Situational Awareness' essay one year after its publication, evaluating which predictions and claims held up and which did not. It checks the essay's quantitative forecasts for training compute, algorithmic efficiency, unhobbling, largest training-cluster size, global AI investment, chip production, electricity consumption, and AI revenue against public data through June 2025. It serves as a calibration exercise for high-stakes AI forecasting.

Key Points

- Reviews the accuracy of major predictions from the original Situational Awareness essay roughly one year after publication

- Evaluates claims about AI capabilities trajectories, scaling trends, and competitive dynamics between AI labs

- Assesses the geopolitical and national security framing of AI development that was central to the original essay

- Provides a model for how to hold AI forecasters accountable and update beliefs based on evidence

- Offers insight into which aspects of rapid AI progress were over- or under-estimated

Cached Content Preview

# Situational Awareness: A One-Year Retrospective

By Nathan Delisle

Published: 2025-06-23

tl;dr: Many critiques of *Situational Awareness* have been qualitative; one year later we can check the numbers. I did my best to verify his claims using public data through June 2025, and found that his estimates mostly check out.

Thanks to Kai Williams & Egg Syntax for their critical feedback.

**Abstract**

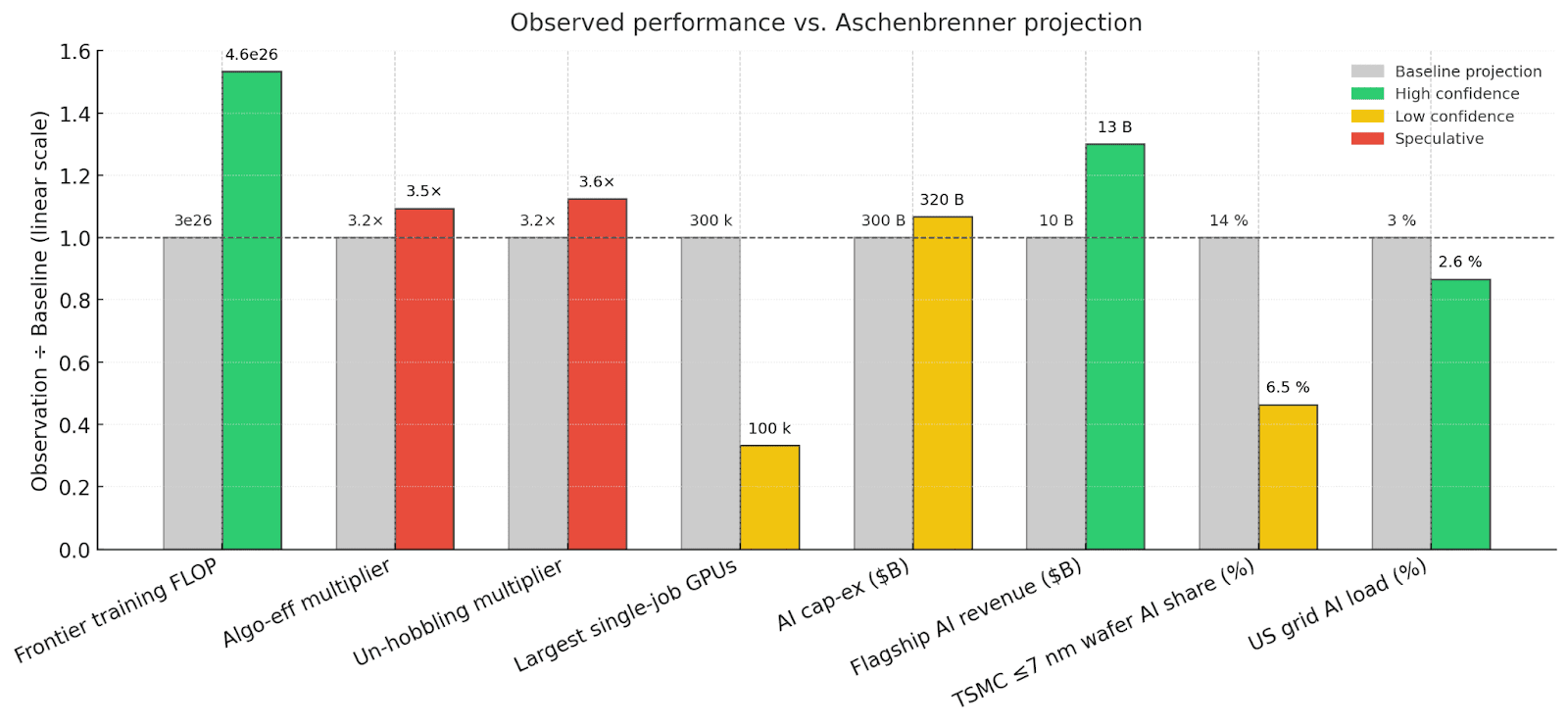

Leopold Aschenbrenner’s 2024 essay *Situational Awareness* forecasts AI progress from 2024 to 2027 in two groups: "drivers" (raw compute, algorithmic efficiency, and post-training capability enhancements known as "unhobbling") and "indicators" (largest training cluster size, global AI investment, chip production, AI revenue, and electricity consumption).[^lgzy1wqyn0s] The drivers and the largest cluster size are expected to grow by about half an order of magnitude (≈3.2×) per year; the other infrastructure indicators roughly double each year (2× per year), and AI revenue doubles every six months (≈4× per year).[^hqyijeeuuqp]
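The conversions between orders of magnitude (OOMs) and annual multipliers above are simple logarithm arithmetic. The Python sketch below reproduces them and compounds each rate over three years. It assumes that 2024 to 2027 counts as three annual steps. The function names and the `RATES` table are illustrative and do not come from the essay or the audit.

```python
import math


def ooms_to_multiplier(ooms: float) -> float:
    """Convert orders of magnitude (base-10 log units) to a growth factor."""
    return 10 ** ooms


def multiplier_to_ooms(factor: float) -> float:
    """Convert a growth factor to orders of magnitude."""
    return math.log10(factor)


def annual_factor_from_doubling(doubling_months: float) -> float:
    """Growth factor per year for a quantity that doubles every `doubling_months`."""
    return 2 ** (12 / doubling_months)


# Annual growth rates as summarized in the retrospective (illustrative table).
RATES = {
    "drivers / largest cluster": ooms_to_multiplier(0.5),  # ~3.16x per year
    "infrastructure indicators": 2.0,                       # doubling yearly
    "AI revenue": annual_factor_from_doubling(6),           # ~4x per year
}


def summarize(rates, years=3):
    """Return {name: (factor/yr, OOMs/yr, cumulative factor after `years`)}."""
    return {
        name: (factor, multiplier_to_ooms(factor), factor ** years)
        for name, factor in rates.items()
    }


if __name__ == "__main__":
    # Assumption: 2024 -> 2027 is treated as three compounding years.
    for name, (f, o, cum) in summarize(RATES).items():
        print(f"{name:28s} {f:5.2f}x/yr  {o:4.2f} OOM/yr  {cum:6.1f}x over 3 yrs")
```

Because OOMs are logarithms, half an OOM per year is 10^0.5 ≈ 3.16× per year, and three such years compound to 10^1.5 ≈ 31.6×. A quantity that doubles every six months grows 2^2 = 4× per year, which is about 0.6 OOM.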

Using publicly available data as of June 2025, this audit finds that global AI investment, electricity consumption, and chip production follow Aschenbrenner’s forecasts. Compute, algorithmic efficiency, and unhobbling gains also appear to follow his forecasts, although with more uncertainty. xAI’s Grok 3 exceeds expectations by about one-third of an order of magnitude.[^319v4a02wq] However, recent OpenAI and Anthropic models trail raw-compute trends by about one-third to one-half of an order of magnitude,[^aro4araohcs] and AI-related revenue growth is several months behind. Overall, the available evidence roughly supports Aschenbrenner’s predicted pace of about half an order of magnitude of progress per year.
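The abstract reports deviations from trend as fractions of an order of magnitude. The minimal Python sketch below translates such gaps into multiplicative factors. It also converts them into months ahead of or behind schedule. That second conversion assumes the trend itself advances at about 0.5 OOM per year. It is an illustrative reading, not a figure from the audit. `TREND_OOMS_PER_YEAR` and the helper names are hypothetical.

```python
import math

# Assumption: the forecast trend advances ~0.5 OOM per year.
TREND_OOMS_PER_YEAR = 0.5


def gap_to_factor(gap_ooms: float) -> float:
    """Multiplicative ratio (observed / trend) implied by a gap of `gap_ooms` OOMs."""
    return 10 ** gap_ooms


def gap_to_months(gap_ooms: float, ooms_per_year: float = TREND_OOMS_PER_YEAR) -> float:
    """Months ahead (+) or behind (-) trend, if the trend grows at `ooms_per_year`."""
    return gap_ooms / ooms_per_year * 12


def gap_from_values(observed: float, expected: float) -> float:
    """Deviation from trend in OOMs: log10(observed / expected)."""
    return math.log10(observed / expected)


# Gaps as stated in the abstract (+ ahead of trend, - behind).
GAPS = {
    "Grok 3": 1 / 3,
    "OpenAI/Anthropic (low end)": -1 / 3,
    "OpenAI/Anthropic (high end)": -1 / 2,
}

for label, gap in GAPS.items():
    print(f"{label:28s} ratio {gap_to_factor(gap):5.2f}x  {gap_to_months(gap):+5.1f} months")
```

Under that assumption, a one-third-OOM gap is a factor of about 2.15, or roughly eight months at that pace. A half-OOM gap is a factor of about 3.16, or roughly a year.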

Graph. At-a-glance scoreboard.

**Introduction**

“It is strikingly plausible that by 2027, models will be able to do the work of an AI researcher/engineer. That doesn’t require believing in sci-fi; it just requires believing in straight lines on a graph.” — Leopold Aschenbrenner
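On a logarithmic axis, a quantity that grows by a constant factor each year traces a straight line. The slope of that line is its growth rate in OOMs per year. The Python sketch below fits such a line by ordinary least squares on log10 values and projects it forward. The data series is hypothetical: it was chosen to grow at roughly half an OOM per year and is not drawn from the essay or the audit.

```python
import math


def fit_log_linear(years, values):
    """Least-squares fit of log10(value) = a + b * year; b is OOMs per year."""
    logs = [math.log10(v) for v in values]
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def extrapolate(intercept, slope, year):
    """Project the fitted straight line (in log space) to `year`."""
    return 10 ** (intercept + slope * year)


# Hypothetical series growing ~0.5 OOM/year (illustrative values, not real data).
years = [2020, 2021, 2022, 2023, 2024]
values = [1.0, 3.2, 10.0, 31.6, 100.0]

a, b = fit_log_linear(years, values)
print(f"fitted slope: {b:.2f} OOM/yr")
print(f"2027 projection: {extrapolate(a, b, 2027):.0f}")
```

Extrapolation of this kind assumes the slope stays constant. The critiques of trend-based forecasts concern whether it does, not the arithmetic of the fit.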

AI forecasts, such as the AI Futures Project’s modal scenario that reaches artificial superintelligence by 2027,[^ligo7fcmgwo] have generated considerable debate recently. These forecasts often implicitly rely on extrapolating recent trends, an approach that critics claim overlooks constraints that could slow progress. Leopold Aschenbrenner’s 2024 essay, *Situational Awareness*, frames its timeline using quantitative forecasts, providing a concrete basis for evaluating these critiques empirically, at least over a one-year horizon. Aschenbrenner organizes his forecasts into two groups:

* Drivers: Measures directly tied to improving model capabilities, specifically raw compute used for frontier-model training, algorithmic efficiency gains, and additional post-training enhancement
