Toss a bitcoin to your Lightcone - LessWrong

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

A fundraising appeal for Lightcone Infrastructure, the nonprofit behind core AI safety community platforms; relevant for understanding the organizational and financial ecosystem supporting AI safety research and discourse.

Forum Post Details

Summary

Lightcone Infrastructure is seeking approximately $2 million in individual donations to sustain operations through 2026, covering LessWrong, the AI Alignment Forum, and the Lighthaven research campus. The post argues that institutional funders have withdrawn support due to political constraints, making grassroots funding critical. Lightcone frames its infrastructure work as highly cost-effective support for the AI safety and existential risk communities.

Key Points

- Lightcone Infrastructure operates LessWrong, the AI Alignment Forum, and the Lighthaven campus in Berkeley, key infrastructure for the AI safety community.

- Fundraising goal is ~$2 million to cover operations through 2026, targeting individual donors after reduced institutional support.

- Institutional funders allegedly reduced contributions due to political constraints, not concerns about effectiveness or impact.

- The post argues Lightcone's coordination and knowledge-sharing infrastructure is among the most cost-effective investments in long-term human survival.

- Individual donations are framed as especially valuable given their independence from political pressures facing large institutional funders.

Cited by 1 page

| Page | Type | Quality |
|---|---|---|
| Lighthaven (Event Venue) | Organization | 40.0 |

Cached Content Preview

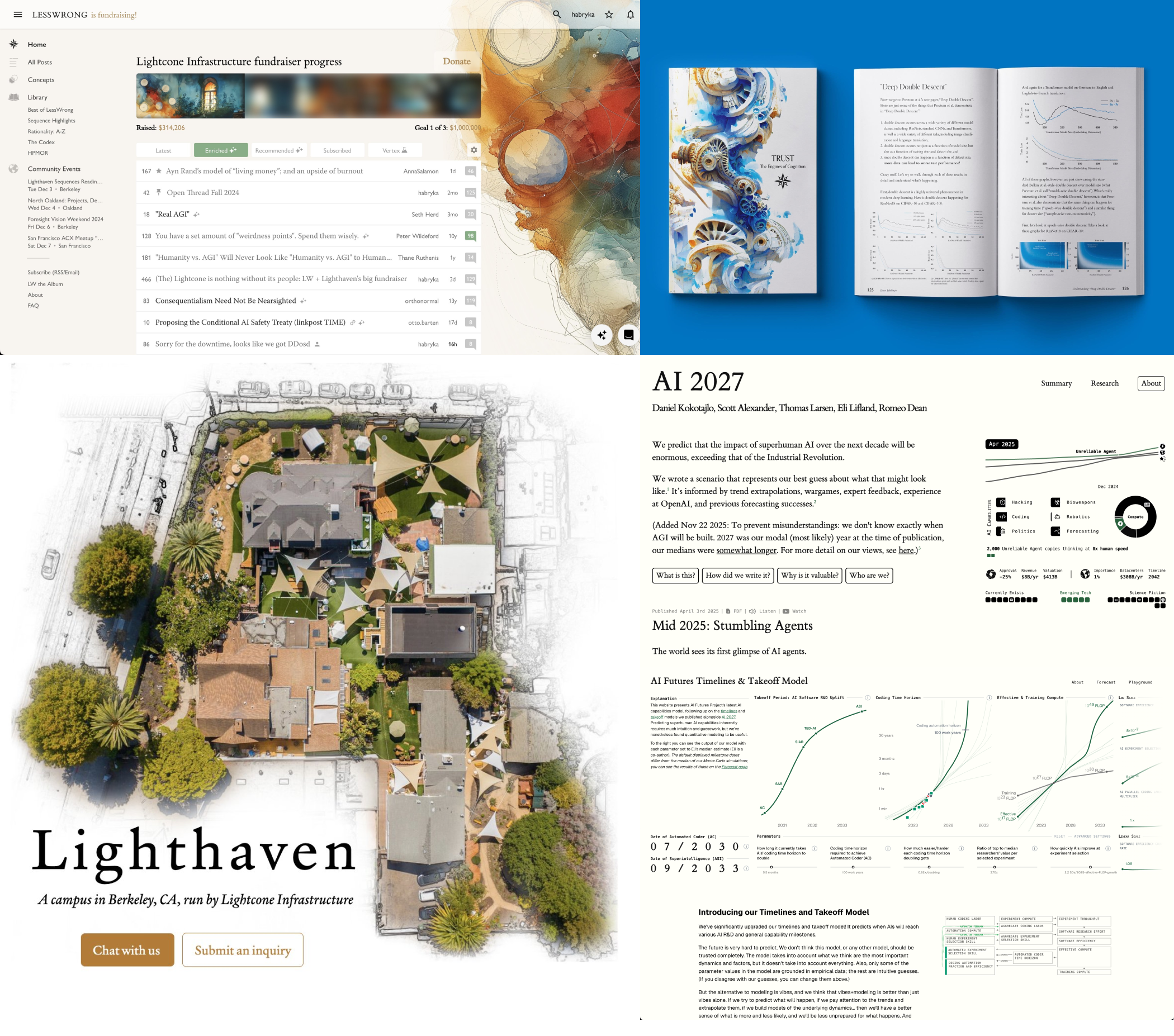

# Toss a bitcoin to your Lightcone – LW + Lighthaven's 2026 fundraiser

By habryka

Published: 2025-12-13

**TL;DR**: Lightcone Infrastructure, the organization behind LessWrong, Lighthaven, the AI 2027 website, the AI Alignment Forum, and many other things, needs about $2M to make it through the next year. [Donate directly](https://lightconeinfrastructure.com/donate), send me an [email](mailto:habryka@lesswrong.com), [DM](https://www.lesswrong.com/users/habryka4), or Signal message (+1 510 944 3235), or leave a public comment on this post if you want to support what we do. We are a registered 501(c)(3) and are IMO the best bet you have for converting money into good futures for humanity.

* * *

We build beautiful infrastructure for truth-seeking and world-saving.

The infrastructure we've built over the last 8 years coordinates and facilitates much of the (unfortunately still sparse) global effort that goes into trying to make humanity's long-term future go well. Concretely, we:

* build and run [LessWrong.com](https://www.lesswrong.com/) and the [AI Alignment Forum](https://www.alignmentforum.org/)

* build and run [Lighthaven](https://www.lighthaven.space/), a ~30,000 sq. ft. campus in downtown Berkeley where we host conferences, researchers, and various programs dedicated to making humanity's future go better

* designed and built the websites for [AI 2027](https://ai-2027.com/), [*If Anyone Builds It, Everyone Dies*](https://ifanyonebuildsit.com/), [AI Lab Watch](https://ailabwatch.org/), and other public communication projects

* act as leaders of the rationality, AI safety, and existential risk communities. We run conferences ([less.online](https://less.online/)) and residencies ([inkhaven.blog](https://www.inkhaven.blog/)), participate in discussions on various community issues, notice and try to fix bad incentives, build [grantmaking](https://lightspeedgrants.org/) [infrastructure](https://survivalandflourishing.fund/s-process), help people who want to get involved, and lots of other things.

In general, we try to take responsibility for the end-to-end effectiveness of these communities. If there is some kind of coordination failure, or part of the engine of impact that is missing, I aim for Lightcone to be an organization that jumps in and fixes that, whatever it is.

As far as I can tell, the vast majority of people who have thought seriously about how to reduce existential risk (and have evaluated Lightcone as a donation target) think we are highly cost-effective, including all institutional funders who have previously granted to us[^xxw41li7awn]. Many of those historical institutional funders are no longer funding us, or are funding us much less, not because they think we are no longer cost-effective, but because of political or institutional constraints on

... (truncated, 98 KB total)