
Relationship between EA Community and AI safety

web · Author

Tom Barnes🔸

Credibility Rating

3/5

Good (3): Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: EA Forum

A 2023 EA Forum discussion piece reflecting community debate about whether AI safety should remain closely tied to EA or develop as a standalone field; useful for understanding the sociological dynamics of the AI safety ecosystem.

Forum Post Details

Karma

157

Comments

15

Forum

eaforum

Forum Tags

Community, AI safety, Building effective altruism, Building the field of AI safety

Metadata

Importance: 35/100 · blog post · commentary

Summary

Tom Barnes examines the growing entanglement between the Effective Altruism community and AI safety, questioning whether continued convergence is desirable. He argues the ideal outcome would be for AI safety to mature as an independent field within AI/ML communities, while EA refocuses on its broader mission and other cause areas.

Key Points

- AI safety has grown substantially within EA, but the two communities may benefit from diverging rather than further converging.

- Barnes proposes AI safety should develop independently within mainstream AI/ML communities, analogous to how EA relates to global health or animal welfare.

- EA's identity and effectiveness may be diluted if it becomes too closely identified with a single cause area like AI safety.

- The post raises open questions about institutional and community design, inviting discussion on EA's future strategic direction.

- Separation could help AI safety gain broader legitimacy and reach beyond the EA ecosystem.

Cited by 1 page

| Page | Type | Quality |
|---|---|---|
| EA and Longtermist Wins and Losses | -- | 53.0 |

Cached Content Preview

HTTP 200 · Fetched Apr 10, 2026 · 2 KB

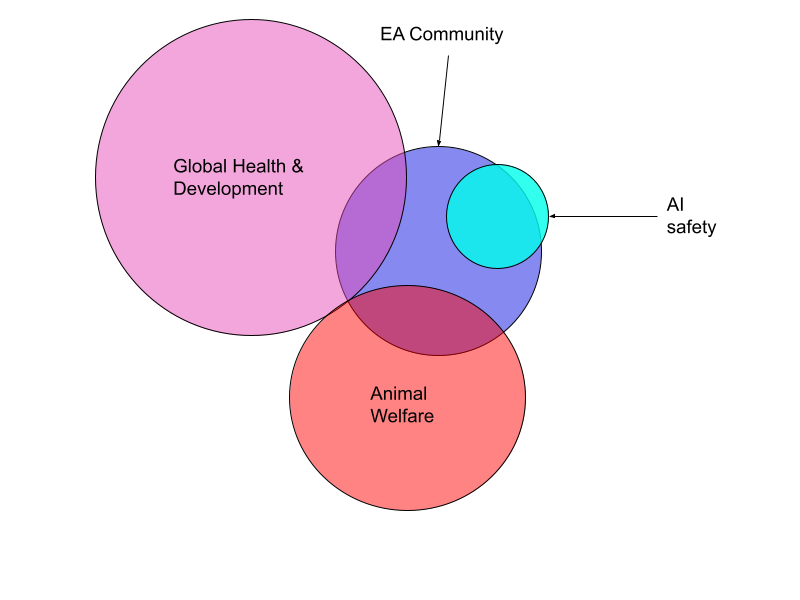

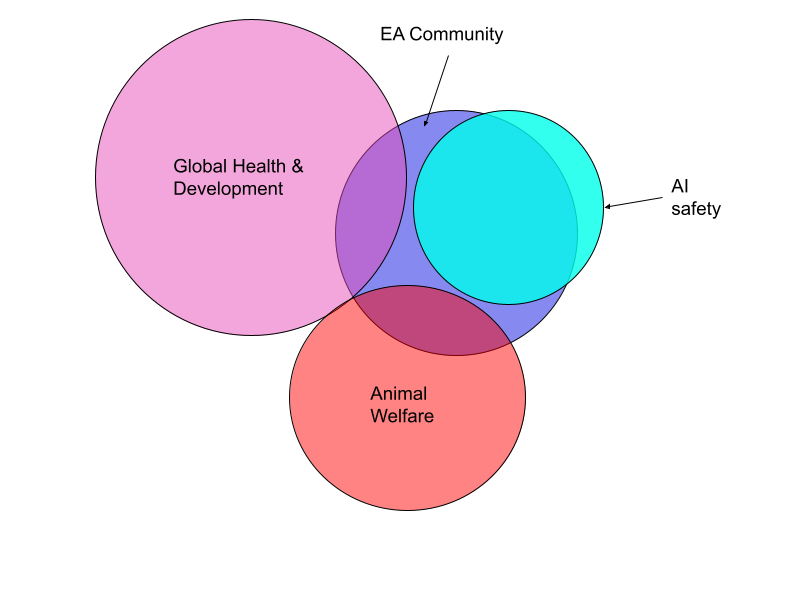

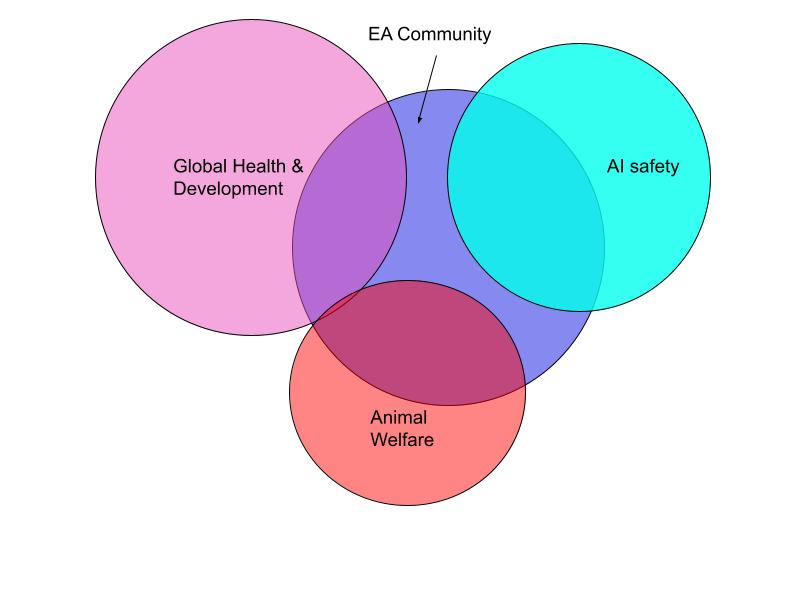

# Relationship between EA Community and AI safety

By Tom Barnes🔸

Published: 2023-09-18

*Personal opinion only. Inspired by filling out the [Meta coordination forum](https://forum.effectivealtruism.org/posts/33o5jbe3WjPriGyAR/announcing-the-meta-coordination-forum-2023#How_You_Can_Help) [survey](https://docs.google.com/forms/d/e/1FAIpQLSf4bKvDVwb7OxSuUfwOyx5_7pIXmuCTlDyWaVhZLgniH9g59g/viewform?usp=sf_link).*

*Epistemic status: Very uncertain, rough speculation. I’d be keen to see more public discussion on this question.*

One open question about the EA community is its relationship to AI safety (see e.g. [MacAskill](https://forum.effectivealtruism.org/posts/euzDpFvbLqPdwCnXF/university-ea-groups-need-fixing?commentId=Bi3cPKt27bF9GNMJf)). I think the relationship between EA and AI safety (+ GHD & animal welfare) previously looked something like this (up until 2022ish):[^gpm1r83e52k]

With the growth of AI safety, I think the field now looks something like this:

It's an open question whether the EA Community should further grow the AI safety field, or whether the EA Community should become a distinct field from AI safety. I think my preferred approach is something like: EA and AI safety grow into new fields rather than into each other:

* AI safety grows in AI/ML communities
* EA grows in other specific causes, as well as an “EA-qua-EA” movement.

As an ideal state, I could imagine the EA community being in a similar state w.r.t. AI safety that it currently has in animal welfare or global health and development.

However, I’m very uncertain about this, and curious to hear what other people’s takes are.

[^gpm1r83e52k]: I’ve omitted non-AI longtermism, along with other fields, for simplicity. I strongly encourage not interpreting these diagrams too literally.

Resource ID:

e0c9049daf6bcd60 | Stable ID: sid_51UMRJOnRt