MATS Alumni Impact Analysis


Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: LessWrong

MATS (ML Alignment & Theory Scholars) is a structured mentorship and research program. This retrospective offers survey data on the effectiveness of AI safety field-building programs and is useful for evaluating similar training and fellowship initiatives.

Forum Post Details


Summary

A survey-based impact analysis of 72 alumni (a 46% response rate) from the first four MATS cohorts, Winter 2021-22 through Summer 2023. Respondents reported strong engagement with the alignment field: 78% work on AI alignment/control or conduct independent alignment research, 68% have published alignment research, and 63% met a research collaborator through the program. The report offers self-reported evidence that MATS helps early-career AI safety researchers build career capital and find research collaborators.

Key Points

- 78% of respondents work or intern on AI alignment/control (49%) or conduct alignment research independently (29%).

- 54% of respondents applied to a job and advanced past the first round of interviews; 64% of those who shared more details accepted a job offer.

- 68% published alignment research, and 78% said their publication "possibly" or "probably" would not have happened without MATS.

- 63% of alumni met a research collaborator through MATS, addressing a key bottleneck for early-career researchers.

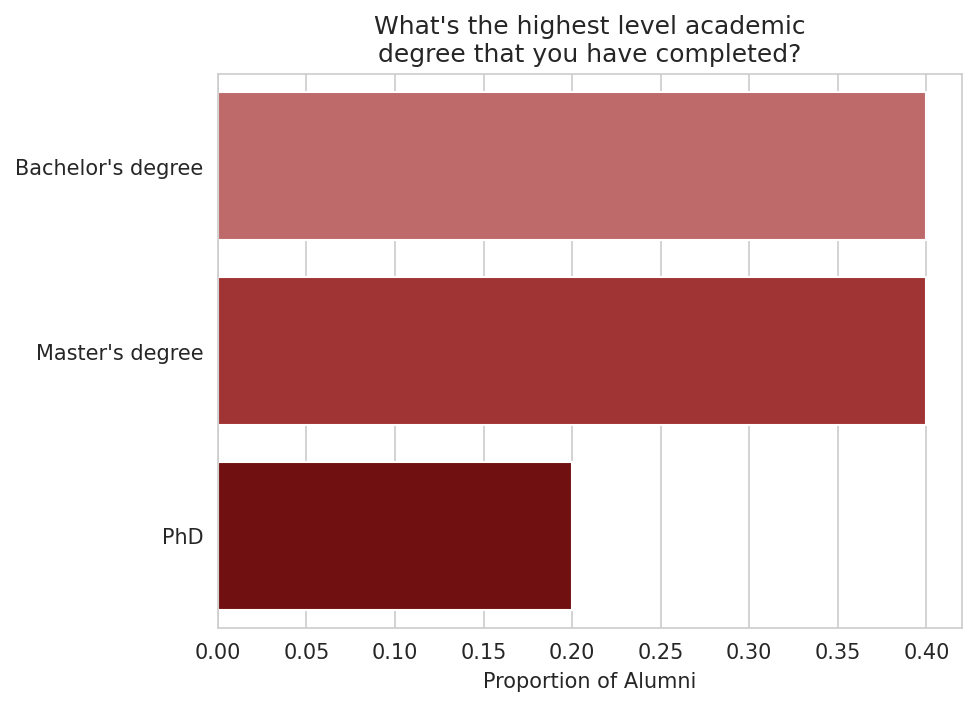

- Alumni backgrounds (highest degree): 40% bachelor's, 40% master's, 20% PhD, indicating that MATS serves researchers at multiple career stages.

Cited by 1 page

| Page | Type | Quality |
|---|---|---|
| MATS ML Alignment Theory Scholars program | Organization | 60.0 |

Cached Content Preview

# MATS Alumni Impact Analysis

By utilistrutil, Juan Gil, yams, LauraVaughan, K Richards, Ryan Kidd

Published: 2024-09-30

Summary
=======

This winter, [MATS](https://www.matsprogram.org/) will be running our seventh program. In early-mid 2024, 46% of alumni from our first four programs (Winter 2021-22 to Summer 2023) completed a survey about their career progress since participating in MATS. This report presents key findings from the responses of these 72 alumni.
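The survey figures in this paragraph also imply a rough size for the whole alumni population. The short Python sketch below is illustrative arithmetic, not a number from the report. It assumes the 46% response rate was rounded to the nearest whole percent and lists every alumni count N for which 72/N rounds to 46%. The function name `consistent_populations` is hypothetical.

```python
def consistent_populations(respondents: int, rate_pct: int) -> list[int]:
    """Return every population size N for which respondents / N rounds to rate_pct percent.

    Assumes the published response rate was rounded to the nearest whole percent.
    """
    # N cannot be smaller than the number of respondents; an upper bound of
    # 100x the respondents is ample for a 46% response rate.
    return [
        n
        for n in range(respondents, respondents * 100 + 1)
        if round(100 * respondents / n) == rate_pct
    ]


RESPONDENTS = 72  # stated in the report
RATE_PCT = 46     # stated in the report

point_estimate = RESPONDENTS / (RATE_PCT / 100)
candidates = consistent_populations(RESPONDENTS, RATE_PCT)
print(f"point estimate: {point_estimate:.1f} alumni")
print(f"consistent alumni counts: {candidates}")
```

Under that rounding assumption, the first four programs had roughly 155 to 158 alumni in total (a point estimate of about 156.5). The report itself does not state the total.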

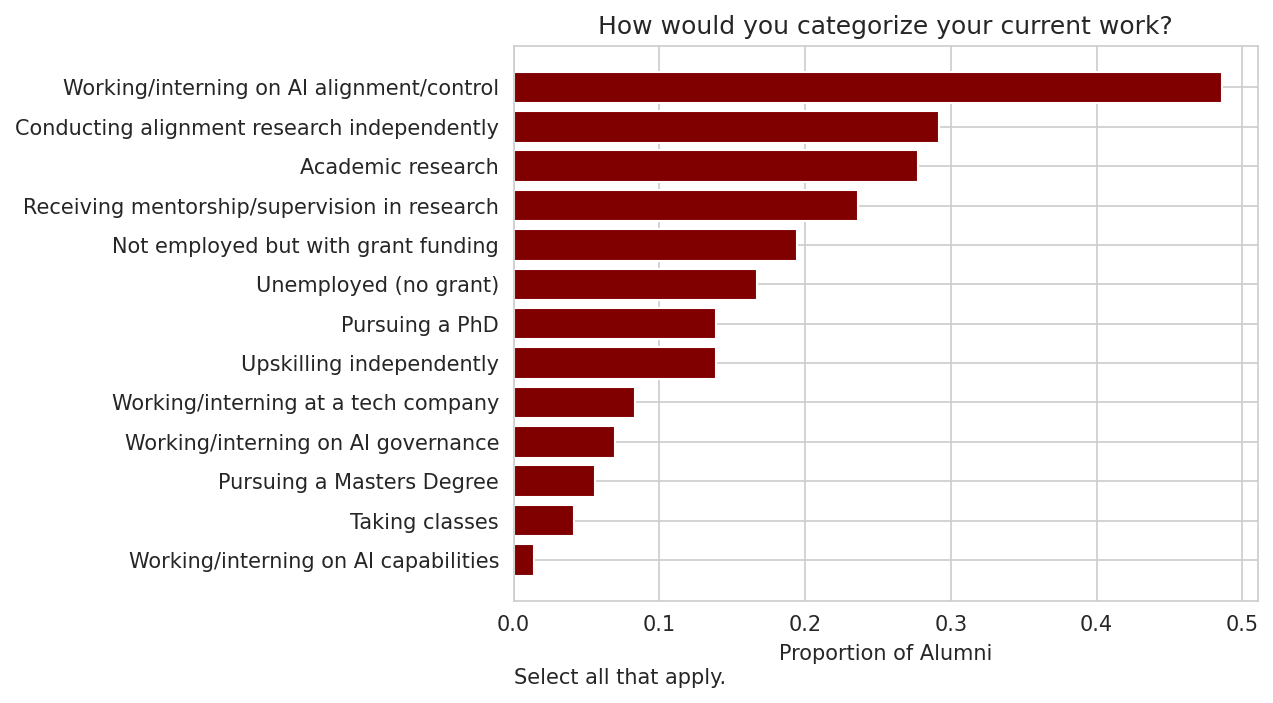

* 78% of respondents described their current work as "Working/interning on AI alignment/control" or "Conducting alignment research independently."
  * 49% are "Working/interning on AI alignment/control."
  * 29% are "Conducting alignment research independently."
* 1.4% of respondents are "Working/interning on AI capabilities."
* Since MATS, 54% of respondents applied to a job and advanced past the first round of interviews.
  * 64% of those who shared more details accepted a job offer.
  * Alumni reported that MATS made them more likely to apply to these jobs by helping them build legible career capital and develop research/technical skills.
* During or since MATS, 68% of alumni had published alignment research.
  * The most common type of publication was a LessWrong post (45%).
  * 78% of respondents said their publication "possibly" or "probably" would not have happened without MATS.
  * 10% of alumni reported that MATS accelerated publication by more than 6 months; 14% said 1-6 months.
  * 8% of alumni said that MATS resulted in "much higher" quality for their publication.
* 63% of scholars met a research collaborator through MATS.
* At this stage in their careers, 46% of alumni said they would benefit from more connections to research collaborators, and 39% said they would benefit from job recommendations.
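Every percentage in this list is a share of a small sample, so converting the figures back to headcounts makes them easier to interpret. The Python sketch below does that arithmetic under stated assumptions. Each figure is treated as a share of all 72 respondents. The report says this explicitly for the current-work and interview figures; for the publication and collaborator figures, "alumni" and "scholars" are assumed to mean respondents. Figures whose base is unclear, such as the publication-attribution shares, are left out. The counts are rounded estimates, not data from the report, and the names in the code are hypothetical.

```python
RESPONDENTS = 72  # stated in the report

# Shares of all respondents, in percent, as given in the summary list.
# Assumption: "alumni" and "scholars" in the publication and collaborator
# figures refer to the same 72 respondents.
SHARES_PCT = {
    "alignment work or independent research": 78,
    "working/interning on alignment/control": 49,
    "independent alignment research": 29,
    "working/interning on capabilities": 1.4,
    "advanced past first interview round": 54,
    "published alignment research": 68,
    "met a research collaborator": 63,
}


def approx_count(percent: float, total: int = RESPONDENTS) -> int:
    # Nearest whole person; the exact count is unknown because percentages are rounded.
    return round(percent * total / 100)


counts = {label: approx_count(pct) for label, pct in SHARES_PCT.items()}

for label, n in counts.items():
    print(f"{label}: ~{n} of {RESPONDENTS}")

# Consistency check: the two current-work subgroups should add up to the headline.
subtotal = counts["working/interning on alignment/control"] + counts["independent alignment research"]
print("subgroups sum to headline:", subtotal == counts["alignment work or independent research"])
```

Under these assumptions, the 49% and 29% subgroups (about 35 and 21 people) sum to the roughly 56 people behind the 78% headline. The 1.4% working on capabilities corresponds to a single respondent (1/72 is about 1.4%).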

Background on Cohort
====================

For 40% of respondents, their highest academic degree was a Bachelor's; for 40%, a Master's; and for 20%, a PhD.

Their most common categories of current work were “Working/interning on AI alignment/control” (49%) and “Conducting alignment research independently” (29%).

Here are some representative descriptions of the work alumni were doing:

* “Going through the first year of grad school at Oxford and continuing research that emerged from my time at MATS.”

* “Working on an interpretability project at AI Safety Camp and just finished the s-risk intro fellowship by CLR a week or two ago.”

* “What could be called "prosaic agent founda

... (truncated, 25 KB total)