The Practical Value of Flawed Models: A Response to titotal’s AI 2027 Critique

Credibility Rating

Good quality. Reputable source with community review or editorial standards, but less rigorous than peer-reviewed venues.

Rating inherited from publication venue: EA Forum

A response post in an ongoing EA Forum debate about the AI 2027 scenario document; relevant to discussions about how the AI safety community should evaluate and use speculative forecasting frameworks.

Summary

This EA Forum post defends the AI 2027 scenario/forecast against critiques by titotal, arguing that even imperfect or speculative models of AI development trajectories have significant practical value for planning and decision-making. The author contends that dismissing flawed forecasting frameworks throws away useful signal along with the noise. The piece explores how decision-makers and researchers should engage with uncertain, potentially inaccurate models rather than rejecting them outright.

Key Points

- Imperfect forecasting models of AI development (like AI 2027) still provide actionable guidance even when specific predictions are wrong or uncertain.

- The critique that a model is 'flawed' does not necessarily diminish its practical utility for orienting research, policy, and safety work.

- Scenario planning and speculative timelines help coordinate community attention and resources even absent certainty about outcomes.

- The post engages directly with titotal's specific objections to AI 2027, offering counterarguments about the epistemics of AI forecasting.

- It also raises broader questions about standards of evidence and usefulness in AI safety forecasting discourse.

Cached Content Preview

# The Practical Value of Flawed Models: A Response to titotal’s AI 2027 Critique

By Michelle_Ma

Published: 2025-06-25

Crossposted from my [Substack](https://bullishlemon.substack.com/p/ai-forecastings-catch-22-the-practical).

[@titotal](https://forum.effectivealtruism.org/users/titotal?mention=user) recently posted an [in-depth critique](https://titotal.substack.com/p/a-deep-critique-of-ai-2027s-bad-timeline) of AI 2027. I'm a fan of his work, and this post was, as expected, phenomenal*.

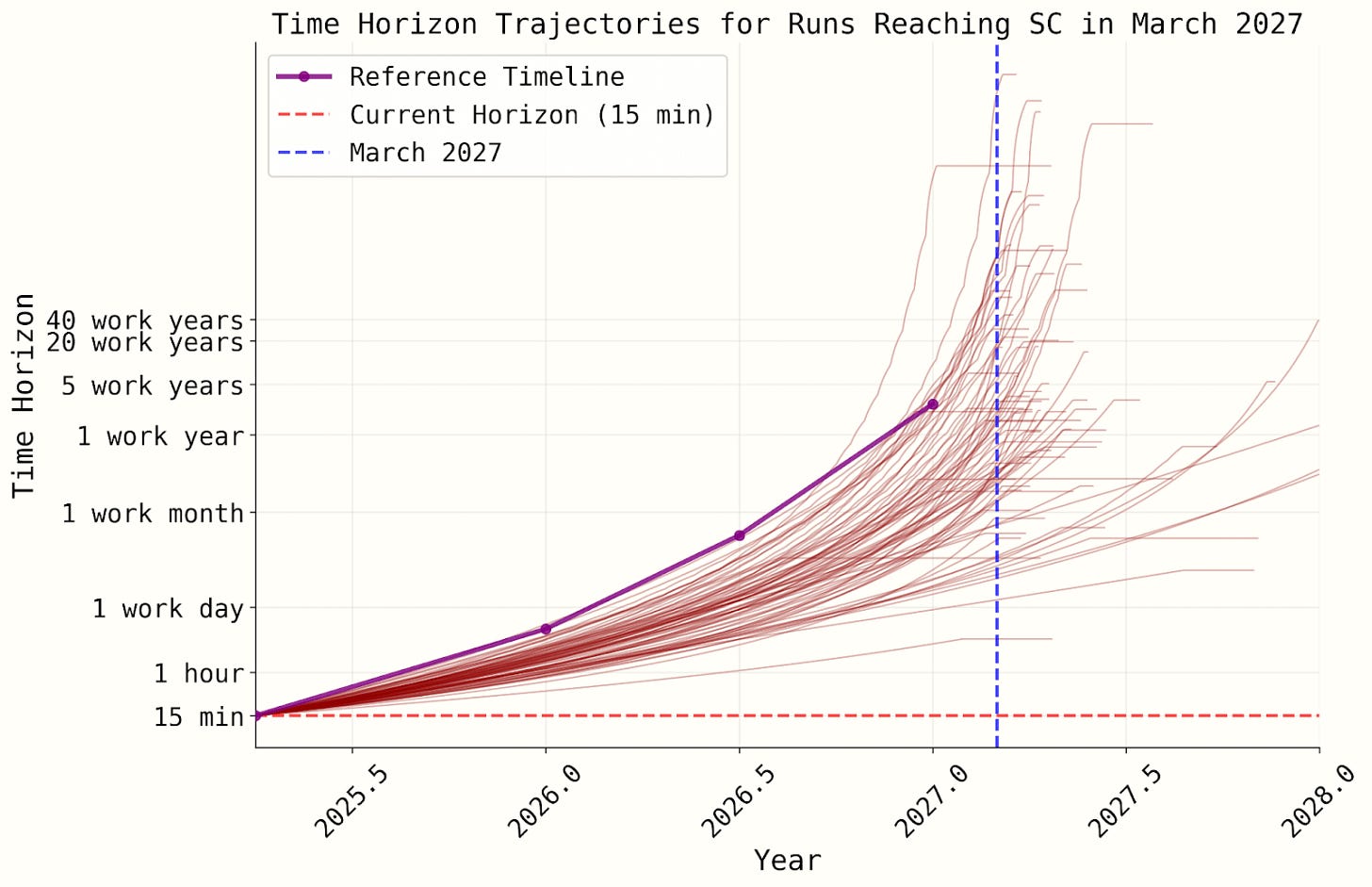

Much of the critique targets the unjustified weirdness of the superexponential time horizon growth curve that underpins the AI 2027 forecast. During my own quick excursion into the Timelines simulation code, I set the probability of superexponential growth to ~0 because, yeah, it seemed pretty sus. But I didn’t catch (or write about) the full extent of its weirdness, nor did I identify a bunch of other issues titotal outlines in detail. For example:

* The AI 2027 authors assign ~40% probability to a “superexponential” time horizon growth curve that shoots to infinity in a few years, regardless of your starting point.

* The RE-Bench logistic curve (a major part of their second methodology) is never actually used during the simulation. As a result, the simulated saturation timing diverges significantly from what their curve fitting suggests.

* The curve they’ve been showing to the public doesn’t match the one actually used in their simulations.
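
To make the first bullet concrete, here is a minimal sketch of why a curve like that can hit infinity in finite time "regardless of your starting point". It assumes, purely for illustration, that "superexponential" means each doubling of the time horizon takes a fixed fraction less time than the previous one. The function names and every number below are hypothetical and are not taken from the AI 2027 code.

```python
def time_to_unbounded_horizon(first_doubling_months: float, shrink: float) -> float:
    """Total months needed for infinitely many doublings.

    Assumption (illustrative only): doubling k takes
    first_doubling_months * (1 - shrink) ** k months, so the total is a
    geometric series that sums to first_doubling_months / shrink.
    """
    if not 0 < shrink < 1:
        raise ValueError("shrink must be strictly between 0 and 1")
    return first_doubling_months / shrink


def months_to_reach(start_hours: float, target_hours: float,
                    first_doubling_months: float, shrink: float) -> float:
    """Months until the horizon first reaches target_hours, one doubling at a time."""
    horizon = start_hours
    elapsed = 0.0
    step = first_doubling_months
    while horizon < target_hours:
        elapsed += step        # time spent on this doubling
        horizon *= 2           # the time horizon doubles
        step *= 1 - shrink     # the next doubling is faster by a fixed fraction
    return elapsed


if __name__ == "__main__":
    # Hypothetical parameters: first doubling takes 4 months, each later one is 50% faster.
    ceiling = time_to_unbounded_horizon(4.0, 0.5)
    for start in (0.001, 1.0, 1000.0):
        months = months_to_reach(start, 1e12, 4.0, 0.5)
        print(f"start={start} h: {months:.4f} months (ceiling {ceiling} months)")
```

Under this assumption the ceiling depends only on the first doubling time and the shrink rate, not on the starting horizon, which is the property the bullet is pointing at. Whether the real model behaves exactly this way is something to check against titotal's post and the Timelines code.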

…And more. He’s also been in communication with the AI 2027 authors, and Eli Lifland recently released an [updated model](https://ai-2027.com/research/timelines-forecast#2025-may-7-update) that improves on some of the identified issues. Highly recommend reading the [whole thing](https://www.lesswrong.com/posts/PAYfmG2aRbdb74mEp/a-deep-critique-of-ai-2027-s-bad-timeline-models#Conclusion)!

That said—it is phenomenal*, with an asterisk. It *is* phenomenal in the sense of being a detailed, thoughtful, and in-depth investigation, which I greatly appreciate since AI discourse sometimes sounds like: “a-and then it’ll be super smart, like ten-thousand-times smart, and smart things can do, like, anything!!!” So the nitty-gritty analysis is a breath of fresh air.

But while titotal is appropriately uncertain about the *technical* timelines, he seems way more confident in his *philosophical* conclusions. At the end of the post, he emphatically concludes that forecasts like AI 2027 shouldn’t be popularized because, in practice, they influence people to make serious life decisions based on wha

... (truncated, 12 KB total)