Credibility Rating

4/5

High (4): High quality. Established institution or organization with editorial oversight and accountability.

Rating inherited from publication venue: METR

Data Status

Not fetched

Cited by 33 pages

Cached Content Preview

HTTP 200 | Fetched Feb 27, 2026 | 16 KB

Model Evaluation & Threat Research

METR conducts research and evaluations to improve public understanding of the capabilities and risks of frontier AI systems.

[Our research](https://metr.org/research) [Careers](https://metr.org/careers)

[Figure: task-completion time horizons of frontier AI models. The horizontal axis spans 2020 to 2025; the vertical axis shows task length from 0 to 15 hours. Example tasks marked: fix complex bug in ML research codebase; exploit a vulnerable Ethereum smart contract; train adversarially robust image model; exploit a buffer overflow in libiec61850; fix bugs in small Python libraries. Models plotted: GPT-2, GPT-3, GPT-3.5, GPT-4, GPT-4 Nov '23, Claude 3 Opus, GPT-4 Turbo, GPT-4o, Claude 3.5 Sonnet (Old), o1-preview, Claude 3.5 Sonnet (New), o1, Claude 3.7 Sonnet, o3, Claude Opus 4, Claude Opus 4.1, GPT-5, Gemini 3 Pro, GPT-5.1-Codex-Max, Claude Opus 4.5, GPT-5.2 (high), Claude Opus 4.6, GPT-5.3-Codex (high).](https://metr.org/time-horizons/)

Time Horizon 1.1 (Current)

Follows the same methodology described in the initial paper, but with a larger task suite. See [release announcement.](https://metr.org/blog/2026-1-29-time-horizon-1-1/)

Time Horizon 1.0 (Mar 2025)

Original time horizon computations. Calculated for models from 2019 through Nov 2025, following the methods described in the original time horizon paper.

[**Task-Completion Time Horizons of Frontier AI Models**](https://metr.org/time-horizons/)

We propose measuring AI performance in terms of the length of software tasks AI agents can complete. We show an exponential increase in this time horizon metric over the past 6 years.
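To make "exponential increase" concrete, here is a minimal sketch of how a doubling time could be estimated from (date, time horizon) pairs with a least-squares fit on a log scale. The function name `doubling_time` and the data points are hypothetical and purely illustrative. They are not METR measurements, and this is not necessarily the fitting procedure used in the paper.

```python
import math


def doubling_time(years, horizons_minutes):
    """Fit log2(horizon) against time by ordinary least squares.

    Returns the estimated number of years per doubling of the horizon.
    Hypothetical helper for illustration only.
    """
    n = len(years)
    logs = [math.log2(h) for h in horizons_minutes]
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    slope = sxy / sxx  # doublings per year
    return 1.0 / slope


# Synthetic data (an assumption, not real results): the horizon
# quadruples every year, i.e. doubles every half year.
example_years = [2020.0, 2021.0, 2022.0, 2023.0]
example_horizons = [1.0, 4.0, 16.0, 64.0]

print(doubling_time(example_years, example_horizons))
```

Under pure exponential growth the points fall on a straight line on a log scale, so the slope of that line gives the doubling rate directly.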

[Read paper](https://arxiv.org/abs/2503.14499) [View repo](https://github.com/METR/eval-analysis-public)

## Featured research

Our AI evaluations research focuses on assessing broad autonomous capabilities and the ability of AI systems to accelerate AI R&D. We also study potential AI behavior that threatens the integrity of evaluations and mitigations for such behavior.

[View all research](https://metr.org/research)

- [General](https://metr.org/#research-for-the-public)

- [Technical](https://metr.org/#research-for-researchers)

- [Policy](https://metr.org/#research-for-policy-researchers)

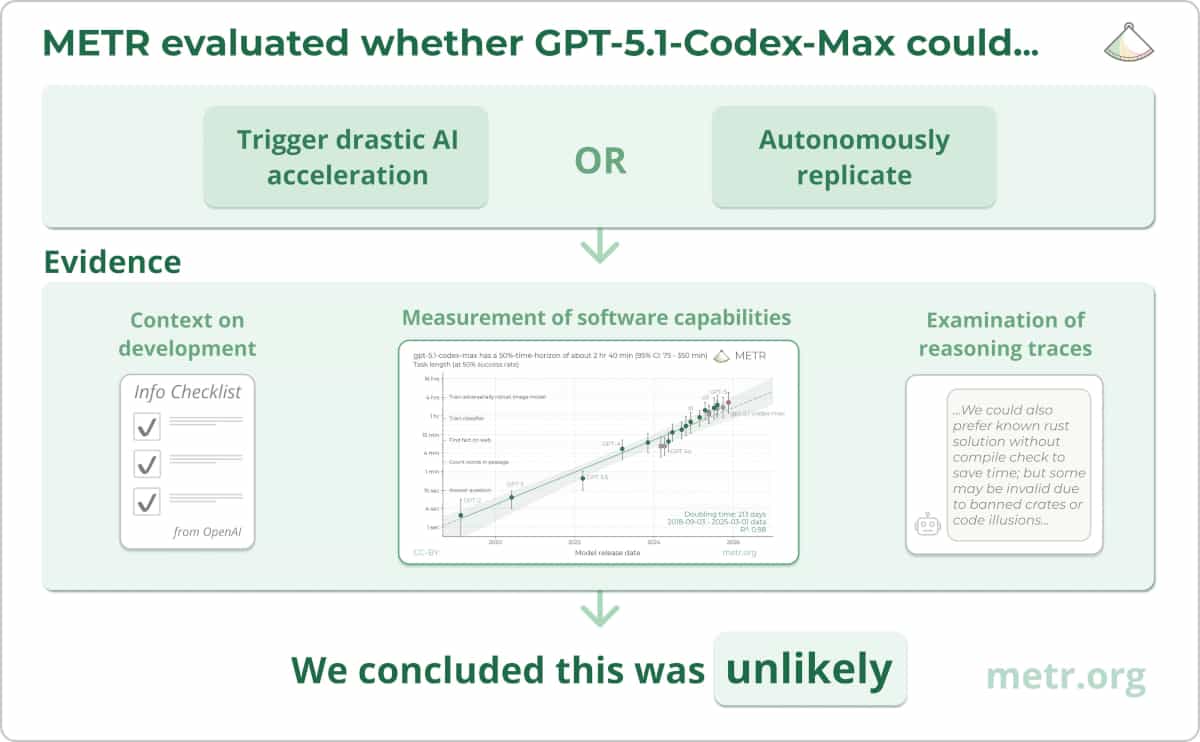

### GPT-5.1 Evaluation Results

We evalua

... (truncated, 16 KB total)

Resource ID: 45370a5153534152 | Stable ID: NWFiOTg0MD